What If I’m Wrong?

“We’ll pass you on the defense, but not on the thesis.” The words did not make sense to me. I had barely managed to drag myself over the finish line as it was. Everyone had assured me that I wouldn’t have been allowed to defend my PhD thesis if I weren’t ready. And now I was looking at semesters, potentially years, of additional work after the defense. I had suspected that something was not right for weeks, but I hadn’t been able to figure out what exactly it was. Now I was angry and confused. What had I done wrong? Had I not proven my case? I feared that additional work would undermine the wonderful story about Cambrian soil ecosystems that I had constructed. In fact, several months later, as I looked harder and closer, my perfect story did indeed fall apart. I became even more angry and confused. What did I have now? Just a pile of numbers that didn’t mean anything. The end of my PhD did not go well and became an important defining moment in my life. It took many years and thousands of miles before I reached a new equilibrium and began to process the significance of the event. For many years, I felt only anger and resentment. But then came acceptance and gratitude. Because ultimately, the half-failure happened because I was not thinking like a scientist, and learning to think like one is the whole point of a PhD. I failed to ask myself the most important question in the world … “what if I’m wrong?”

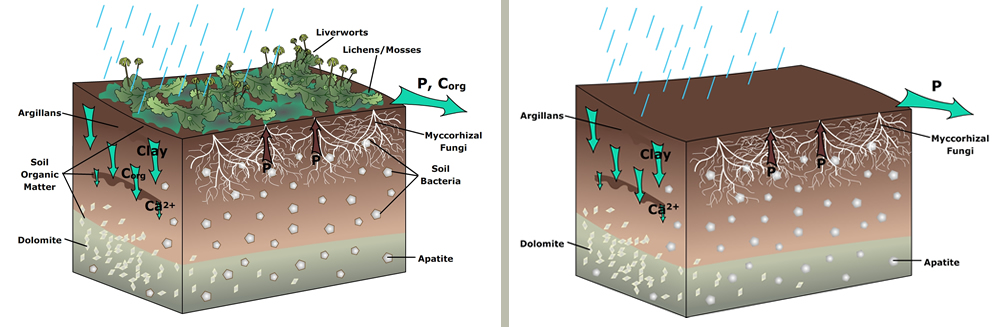

“How do I prove I’m right?” vs. “What if I’m wrong?” On the left was the Cambrian soil ecosystem I proposed based on all observations, no matter the quality, that fed into that story. On the right is what the quality observations actually supported.

How I ultimately became a scientist is not a particularly interesting story, but I’ll tell it anyway. As a child, I liked reading about space and dinosaurs and it became a given that I would grow up to be a scientist. I especially liked the stories of the scientists standing against the world with new ideas that no one wanted to accept, until they were ultimately proven correct. Naturally, I internalized these ideas of a scientist as a lone genius working to transform the world. In reality, however, this is not science at all and that outdated idea of a scientist was never trained out of me. I walked into my PhD defense still fully believing that I had a case to argue and I needed to show and defend enough proof to convince the committee of my story. That idea was difficult to dislodge from my brain, but only when I successfully did so was I able to actually become a scientist. It was not a pleasant experience because I kept asking myself the wrong questions and couldn’t figure out the right ones to ask.

I am not unique in having held this idea of what a scientist is and how the scientific endeavor proceeds. Many people have this incorrect perception of science, including scientists. It is not surprising in a culture that values the underdog and overcoming overwhelming adversity to show that yes, you were indeed right after all. But this also creates a dangerous narrative where it is weakness to be incorrect and to learn from mistakes. Science education can play a role in correcting this misconception, but often doesn’t because of its curricular structure. Science classes prize and reward learning the “truth,” and even the historical anecdotes about how the scientific process actually works tend to focus on the scientists who ultimately prevailed against the establishment. Lab activities likewise don’t value or reward true experimentation, but grade for and reward competent execution of the correct methodologies. All of these narratives reinforce a question that most people already ask themselves far too often … “how do I prove that I am right?”

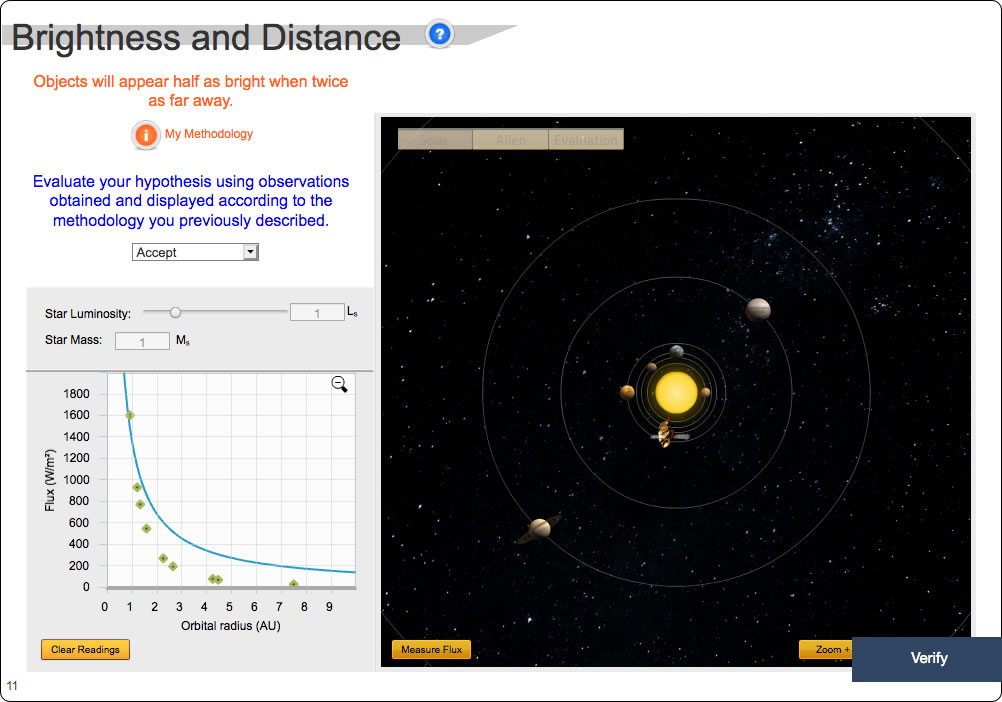

I can see this in the classes that I teach. A question I often ask in Habitable Worlds after students select a proposition to test is whether they should be looking for evidence to prove or disprove the proposition. Consistently, every semester, only about half say they should work to find evidence that disproves their proposition. I doubt most understand why this is the correct answer beyond the notion that this is the way science is supposed to work or, more likely, a guess that the “counterintuitive” answer must be the correct one; otherwise, why would I be asking? In early iterations of this activity, the proposition was then converted into a graphed line that students were supposed to compare to what was plotted from their observations. Shockingly to me, students became incredibly frustrated that their observations did not fall along their guess line and kept reporting this as a bug, asking in frustration “how do I make the dots fall on the line?” It did not occur to many of these students that they may have just been wrong. The students kept asking themselves “how do I show that I’m right?” instead of “what if I’m wrong?” It changed their whole approach to the activity and led to so much frustration that I had to redesign the activity to step them through a better process. To me, it was surprising that students refused to concede that they may have made a mistake. But it shouldn’t have been … I used to be that exact student.

Students became frustrated when the observation dots wouldn’t fall along their prediction line. Apparently, they considered an incorrect prediction to be the least likely explanation. The correct relationship is that objects will appear a quarter as bright when twice as far away. (Habitable Worlds v2.0, Fall 2013 semester)
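The relationship in the caption (twice as far away, a quarter as bright) is an inverse-square law, and the “what if I’m wrong?” check can be sketched in a few lines. This is only an illustration, not code from Habitable Worlds: the function names, the tolerance, and the linear guess are assumptions made for the example, and the observations are idealized values computed from the inverse-square relationship rather than real student data.

```python
def relative_brightness(distance_ratio: float) -> float:
    """Brightness relative to the starting point, assuming an inverse-square law."""
    return 1.0 / distance_ratio ** 2


def linear_guess(distance_ratio: float) -> float:
    """A hypothetical student prediction: twice as far means half as bright."""
    return 1.0 / distance_ratio


def disagreements(prediction, observations, tolerance=0.05):
    """Return the observations that the prediction fails to reproduce."""
    return [(d, b) for d, b in observations if abs(prediction(d) - b) > tolerance]


# Idealized observations (distance ratio, relative brightness), NOT real data.
observations = [(d, relative_brightness(d)) for d in (1.0, 2.0, 4.0)]

# Ask "what if I'm wrong?": list the points each prediction cannot explain.
print(disagreements(linear_guess, observations))
print(disagreements(relative_brightness, observations))
```

The useful output is the list of points a prediction cannot explain. A non-empty list is a reason to revise the prediction, not a bug in the dots.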

The questions we ask at the outset of an investigation frame how we pursue the investigation. In framing my PhD work around the question “how do I prove that I am right?”, I biased my work towards investigations and procedures that would solidify my case and tended to avoid ones that didn’t add to the narrative I had already built in my head. This resulted in a lot of frustration with observations that didn’t fit, much like it did for my students. If I had realized that the correct question to ask is “what if I’m wrong?”, I would have conducted different analyses that would have identified the weak points in my argument far earlier. The fact that I never caught this, and no one else did either, shows how much of the process of science is assumed to have been picked up somewhere else during science education. Apparently, I should have already known that science worked that way. Where I would have learned that, however, is not clear.

So how do we fix this so that we don’t generate citizens and scientists who don’t truly understand scientific thinking? An important component of science education needs to be the internalization of the question “what if I’m wrong?” Far too few people ask themselves this question, including scientists. This leaves us unable to address our significant and complex problems, as we make decisions based on how we think the world should work, then get angry and confused that the world didn’t respond the way we expected. This is a problem currently affecting many of our leaders around the world, who live in a state of outright reality-denial. But it also affects experts who double down on their ideas, even when observations run contrary to what they predicted (“The Peculiar Blindness of Experts,” The Atlantic, June 2019 issue). This all stems from a failure, and often an unwillingness, to ask the question “what if I’m wrong?”

We can start to address this problem in the science classroom across all levels by making “what if I’m wrong?” the central question students engage with all year so that the question becomes second nature. A potential way to do this is to set up an activity very early in the course where students go about “proving a case” that is stacked towards a particular outcome. Then have the students run through the activity again using “what if I’m wrong?” as the framing question, yielding a different conclusion. Examples like this tend to be pretty effective in getting the point across because they force students to confront the limitations of their current thinking and watch it fail. Then, throughout the course, reinforce the question. When students make a statement about how they think the world works, follow up with “what would you expect to find if you’re wrong?” This gets students thinking about observations they could make that would not be expected based on their understanding of the topic, which then opens the door to thinking about the alternative explanations that those observations lead to. This makes for stronger hypothesis testing in a science classroom because the structure of the activity leads students to better explanations, rather than handing them an ego-deflating “you’re wrong” answer that may result in disengagement by students who already had difficulty picturing themselves as scientists.

Additionally, science curricula must be modified to make them more exploratory. It does no good to ask students to ask themselves “what if I’m wrong?” and then punish them for being wrong. The learning process consists of two steps: the “learning” part and the “evaluation” part, where a teacher inspects whether the learning part did anything. In paper-and-pen classes, the learning part is assumed to happen elsewhere (because it is too difficult to track and inspect) and really, the teacher is only evaluating the results of that process. So every homework, quiz, and exam … all the feedback that a teacher ever gives to a student … is an evaluation. When the learning process itself goes uncommented on, the only feedback that a teacher ever gives a student is punishment for being wrong. This is where digital and adaptive systems can help. When properly built, they can capture the entire learning process and give students feedback on how to improve what they’re doing without punishing them for making mistakes while learning. Habitable Worlds is structured this way: the “learning” components of the course are evaluated without penalty, and students can make as many mistakes as they want without losing a single point (although seriously, after 800 tries on a single activity, some students really should learn to ask for help).

Science classrooms are supposed to teach scientific reasoning and critical thinking. All too often, however, they focus on correctly memorizing the known facts and correctly executing known procedures. This results in a conflicting message: we say that science is exploratory and that mistakes are part of the process, but our courses and grading structures strongly discourage making any mistakes by attaching significant consequences to them. Modifying the science classroom by framing every activity around the question “what if I’m wrong?” and guiding students through the application of that question without penalties can help move students towards internalizing that question as the most important one to ask. Internalizing that question is difficult because it requires humility, a quality that is often lacking in a society that focuses on and rewards certainty and brashness. But asking that question is the only way to true critical thinking and, ultimately, to a better understanding of our universe so that we can make better decisions. It’s certainly a question I wish I had learned to ask myself far earlier than I did, which would have made my path to a PhD easier. And it’s a question that I am increasingly asking my students to grapple with as often as possible so that they don’t go down the same path.

Notes for Practice

Scientific Thinking

Emphasize the question “What if I’m wrong?” Scientific inquiry can only truly begin when you become willing to question your own assumptions, rather than focusing on finding evidence to support your preconceived notions.

Have students reflect on what they would expect to find if their assumptions are incorrect. Many students are not comfortable with this kind of thinking, so the more practice they can get with it the better.

Create consequence-free exploration zones. If students are to internalize exploring pathways that may potentially be wrong, they can’t be penalized for doing so.